In enterprise technology, speed isn't just a nice-to-have. It's often the difference between leading a market and catching up to one. When it comes to deploying AI across a business, MCP (Model Context Protocol) servers have quietly become one of the biggest speed unlocks in the industry.

Let's look at why.

Speed at Every Stage of the AI Lifecycle

When people talk about 'speed' in the context of MCP servers, they usually mean one of two things: how fast you can build AI-powered workflows, and how fast those workflows run in production. MCP servers meaningfully improve both, but the bigger story is the first one.

Faster Development

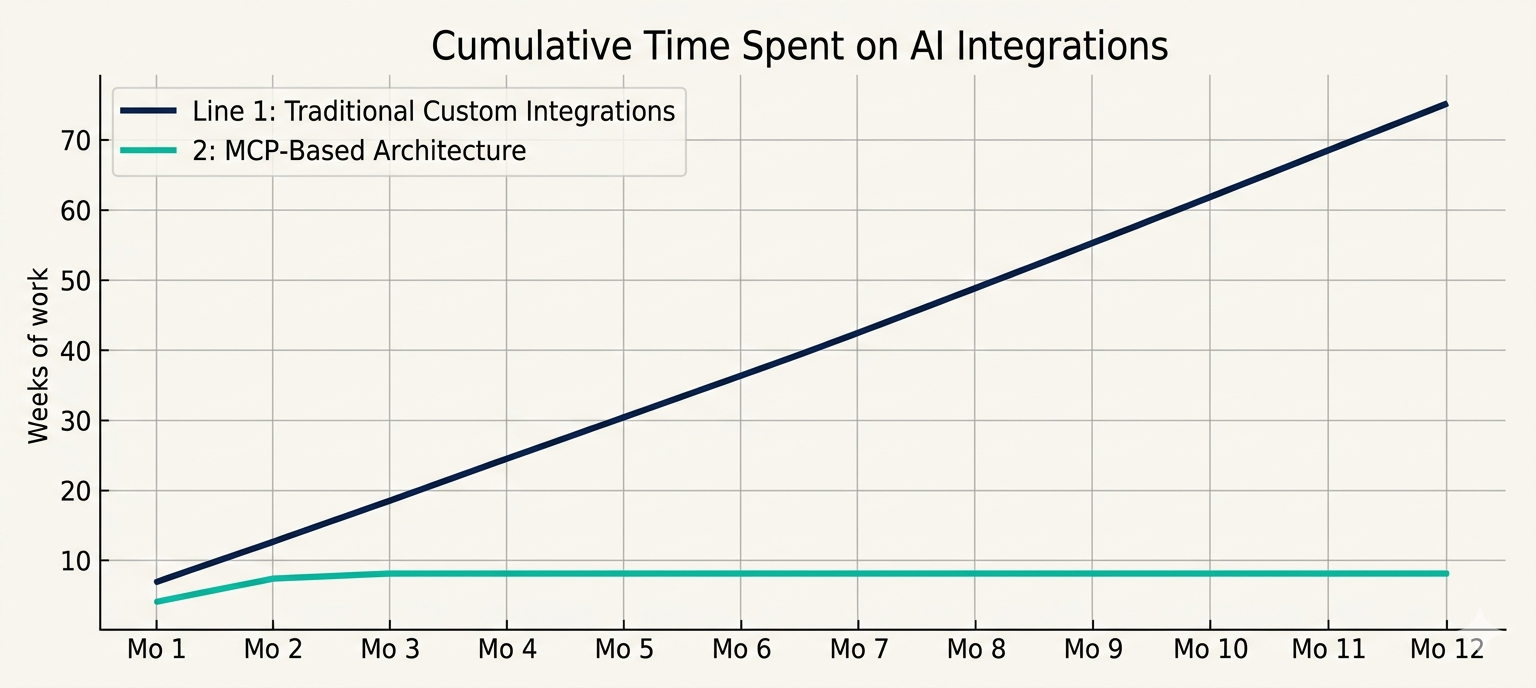

Traditional AI integrations are slow because every system needs a custom connector. Want your AI to read from your CRM? Build a connector. Write to your ticketing system? Build another one. Pull from your warehouse? Another. Each involves auth, error handling, rate limits, schema mapping, and testing. Weeks of work, minimum.

MCP collapses that. Once a tool has an MCP server in front of it, any MCP-compatible AI agent, current or future, can discover and call it through the same standard interface, with no connector written for that particular agent. The second integration takes a fraction of the time of the first. The tenth takes almost none.
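To make the "wrap once, use everywhere" idea concrete, here is a minimal sketch in plain Python. It is not the MCP SDK: `ToolServer`, `discover`, and the two example backends are hypothetical stand-ins. They loosely mirror the two operations MCP defines for tools, listing them (`tools/list`) and calling one (`tools/call`).

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class ToolServer:
    """Hypothetical stand-in for an MCP server: named tools behind one interface."""
    name: str
    tools: Dict[str, Callable[..., Any]] = field(default_factory=dict)

    def list_tools(self) -> List[str]:
        # Discovery: an agent asks what is available instead of hard-coding it.
        return sorted(self.tools)

    def call_tool(self, tool: str, arguments: Dict[str, Any]) -> Any:
        # Invocation: the same call shape for every tool on every server.
        if tool not in self.tools:
            raise KeyError(f"{self.name} has no tool named {tool!r}")
        return self.tools[tool](**arguments)


# Two made-up backends, each wrapped exactly once.
crm = ToolServer("crm", {
    "get_account": lambda account_id: {"id": account_id, "tier": "gold"},
})
tickets = ToolServer("tickets", {
    "open_ticket": lambda title: {"title": title, "status": "open"},
})


def discover(servers: List[ToolServer]) -> Dict[str, List[str]]:
    """Build the catalog any agent would see by asking each server."""
    return {server.name: server.list_tools() for server in servers}


catalog = discover([crm, tickets])
account = crm.call_tool("get_account", {"account_id": "A-17"})
ticket = tickets.call_tool("open_ticket", {"title": "Renewal question"})
```

Nothing in `discover` or `call_tool` is specific to the CRM or the ticketing system, which is why adding a third or tenth backend does not require touching the agent side.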

Teams adopting MCP-based architectures frequently report going from months of integration work down to days. That's not incremental. That's a different category.

Faster Iteration

Speed isn't just about the initial build. It's about how quickly you can change things.

With MCP, swapping out a model, adjusting permissions, adding a new data source, or restructuring a workflow doesn't require rewriting the whole system. You change one layer, not all of them. That means ideas can move from 'what if we tried…' to 'shipped' in the same week.
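The "change one layer" point can be sketched as follows, assuming the model and the tools only meet at a narrow seam. Everything here is illustrative: `run`, `model_v1`, `model_v2`, and the order data are hypothetical names, and a real model would be an LLM call rather than a small function.

```python
from typing import Any, Callable, Dict, Tuple

# A "model" in this sketch is any callable that turns a request into
# a (tool name, arguments) pair. The tool layer never needs to know which one.
Model = Callable[[str], Tuple[str, Dict[str, Any]]]
Tools = Dict[str, Callable[..., Any]]

ORDERS = ["blue widget", "red widget", "green gadget"]


def run(model: Model, tools: Tools, request: str) -> Any:
    """The only place where the model layer and the tool layer touch."""
    tool_name, arguments = model(request)
    return tools[tool_name](**arguments)


def model_v1(request: str) -> Tuple[str, Dict[str, Any]]:
    # Passes the request through unchanged.
    return "search_orders", {"query": request}


def model_v2(request: str) -> Tuple[str, Dict[str, Any]]:
    # A replacement model that also normalizes the request.
    return "search_orders", {"query": request.strip().lower()}


tools: Tools = {
    "search_orders": lambda query: [order for order in ORDERS if query in order],
}

# Swapping the model is a one-argument change; the tools are untouched.
result_v1 = run(model_v1, tools, "widget")
result_v2 = run(model_v2, tools, "  WIDGET ")

# Adding a data source is one new entry; neither model is rewritten.
tools["count_orders"] = lambda: len(ORDERS)
order_count = run(lambda _request: ("count_orders", {}), tools, "how many orders?")
```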

Fast iteration is what separates organizations that experiment with AI from organizations that benefit from AI.

Faster Onboarding

Once you have a handful of MCP servers up and running, onboarding a new team or new workflow becomes almost trivial. The AI already has the tools. The tools already have the permissions. The new team just needs to describe what they want done.

Speed in Production

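On the runtime side, MCP servers are designed to be efficient. The protocol exchanges compact JSON-RPC 2.0 messages over lightweight transports (standard input/output for local servers, HTTP with optional streaming for remote ones), and because every request carries its own ID, a client can keep several tool calls in flight at once.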
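For a sense of how small the wire format is, the sketch below builds a tool invocation in the JSON-RPC 2.0 shape MCP uses for `tools/call`. The helper name, the tool name `get_account`, and its argument are made up for illustration.

```python
import json
from typing import Any, Dict


def tool_call_request(request_id: int, tool: str, arguments: Dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 request in the shape used for `tools/call`."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,  # lets the client match the response to this request
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    # Compact separators keep the payload free of padding whitespace.
    return json.dumps(message, separators=(",", ":"))


# "get_account" and its argument are hypothetical examples.
wire = tool_call_request(1, "get_account", {"account_id": "A-17"})
decoded = json.loads(wire)
```

The whole request is a single short line of JSON, so serialization and transport add very little on top of whatever time the underlying tool itself needs.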

In practice, this means AI agents using MCP feel responsive, not laggy. For customer-facing workflows — chat support, assisted search, interactive copilots — that responsiveness is the difference between a feature users love and one they abandon.

It also means that complex, multi-step workflows (pull data, cross-reference, update three systems, notify a human) can complete in seconds rather than feeling like batch jobs.
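The multi-step workflow described above can be sketched with Python's `asyncio`, assuming the three updates are independent of one another. The tool names and the simulated delays are hypothetical; `asyncio.sleep` stands in for network and backend time.

```python
import asyncio
import time
from typing import List


async def call_tool(name: str, delay: float = 0.05) -> str:
    # Simulated tool call: the sleep stands in for a real round trip.
    await asyncio.sleep(delay)
    return f"{name}: done"


async def workflow() -> List[str]:
    # Step 1: pull data (later steps depend on it, so it runs first).
    data = await call_tool("pull_data")
    # Step 2: the three updates are independent, so they run concurrently.
    updates = await asyncio.gather(
        call_tool("update_crm"),
        call_tool("update_tickets"),
        call_tool("update_warehouse"),
    )
    # Step 3: notify a human only after every update has finished.
    notice = await call_tool("notify_human")
    return [data, *updates, notice]


start = time.perf_counter()
results = asyncio.run(workflow())
elapsed = time.perf_counter() - start
```

Because the three updates overlap, the workflow waits for roughly three delays rather than five, and `asyncio.gather` still returns the results in the order the calls were listed.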

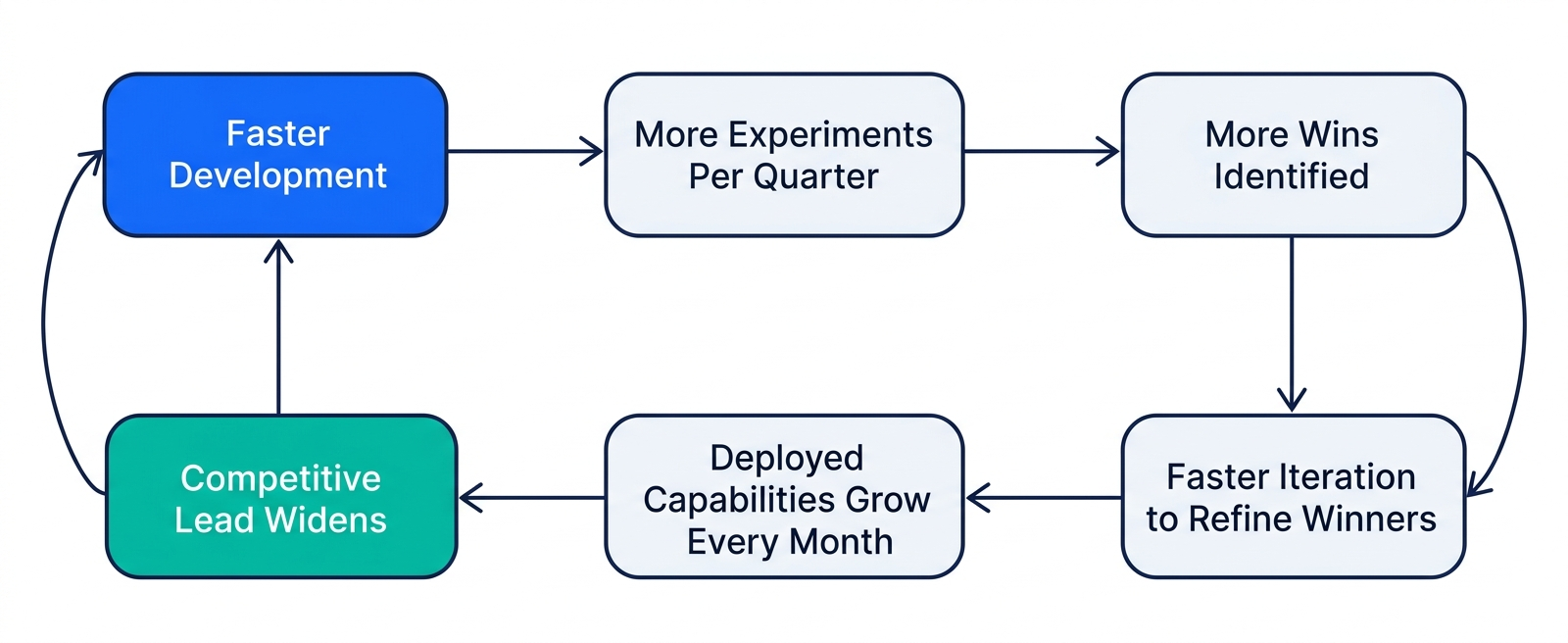

Why Speed Compounds

Here's the insight that's easy to miss: speed isn't just about getting one thing done faster. It's about what becomes possible when everything moves faster.

When new AI capabilities take days instead of months, you can afford to try more things. When iteration is fast, you can course-correct based on real usage instead of upfront guesses. When onboarding is fast, AI spreads organically across the business instead of being bottlenecked by one team.

Organizations winning with AI right now aren't necessarily the ones with the biggest budgets or the most advanced models. They're the ones that have built infrastructure letting them move faster than their competitors. MCP is a big part of that story.

The Speed Gap Is Widening

As MCP matures and more tools natively support it, the gap between MCP-based architectures and traditional custom-integration approaches is only going to grow. Companies that adopt early compound their advantage with every new use case they add. Companies that wait start from zero every time.

If there's ever been a moment where moving sooner pays off, this is it.

Want to Move Faster With AI?

CloudVerve Technologies helps businesses accelerate their AI and automation journey with MCP-driven architectures that cut deployment times dramatically. Our team has helped organizations compress months of integration work into days — and turn AI from a slow experiment into a fast, scalable capability.
